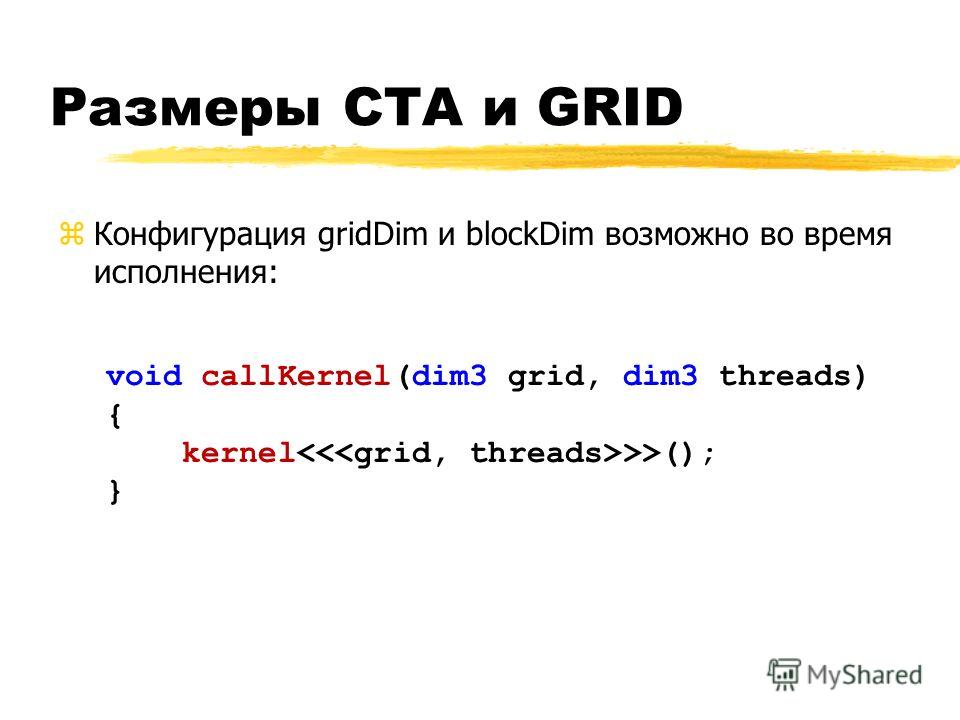

This post is the first in a series on CUDA Fortran, which is the Fortran interface to the CUDA parallel computing platform. If you are familiar with CUDA C, then you are already well on your way to using CUDA Fortran, as it is based on the CUDA C runtime API. There are a few differences in how CUDA concepts are expressed using Fortran 90 constructs, but the programming model for both CUDA Fortran and CUDA C is the same. If you are familiar with Fortran but new to CUDA, this series will cover the basic concepts of parallel computing on the CUDA platform. CUDA Fortran is essentially Fortran with a few extensions that allow one to execute subroutines on the GPU by many threads in parallel.

CUDA Programming Model Basics

Before we jump into CUDA Fortran code, those new to CUDA will benefit from a basic description of the CUDA programming model and some of the terminology used. (Those familiar with CUDA C or another interface to CUDA can jump to the next section.)

The CUDA programming model is a heterogeneous model in which both the CPU and GPU are used. In CUDA, the host refers to the CPU and its memory, while the device refers to the GPU and its memory. Code running on the host manages the memory on both the host and device, and also launches kernels, which are subroutines executed on the device. These kernels are executed by many GPU threads in parallel.

Given the heterogeneous nature of the CUDA programming model, a typical sequence of operations for a CUDA Fortran code is:

1. Declare and allocate host and device memory.
2. Transfer data from the host to the device.
3. Execute one or more kernels.
4. Transfer results from the device to the host.

Keeping this sequence of operations in mind, let’s look at a CUDA Fortran example.

In a recent post, Mark Harris illustrated Six Ways to SAXPY, which includes a CUDA Fortran version. SAXPY stands for “Single-precision A*X Plus Y”, and is a good “hello world” example for parallel computation. In this post I want to dissect a similar version of SAXPY, explaining in detail what is done and why. The complete SAXPY code consists of a module, `mathOps`, and a program, `testSaxpy`. Inside the module, the kernel is declared as `attributes(global) subroutine saxpy(x, y, a)`, and each thread computes the index of the element it works on as `i = blockDim%x * (blockIdx%x - 1) + threadIdx%x`.

The module mathOps contains the subroutine saxpy, which is the kernel that is performed on the GPU, and the program testSaxpy is the host code. Let’s begin our discussion of this program with the host code.

The program testSaxpy uses two modules, `use mathOps` and `use cudafor`. The first is the user-defined module mathOps, which contains the saxpy kernel, and the second is the cudafor module, which contains the CUDA Fortran definitions. In the variable declaration section of the code, two sets of arrays are defined: `real :: x(N), y(N), a` and `real, device :: x_d(N), y_d(N)`. The real arrays x and y are the host arrays, declared in the typical fashion, and the x_d and y_d arrays are device arrays declared with the device variable attribute. As with CUDA C, the host and device in CUDA Fortran have separate memory spaces, both of which are managed from host code. But while CUDA C declares variables that reside in device memory in a conventional manner and uses CUDA-specific routines to allocate data on the GPU and transfer data between the CPU and GPU, CUDA Fortran uses the device variable attribute to indicate which data reside in device memory and uses conventional means to allocate and transfer data. The arrays x_d and y_d could have been declared with the allocatable attribute in addition to the device attribute and allocated with the F90 allocate statement.

One consequence of the strong typing in Fortran coupled with the presence of the device attribute is that transfers between the host and device can be performed simply by assignment statements. The host-to-device transfers prior to the kernel launch are done by `x_d = x` and `y_d = y`, while the device-to-host transfer of the result is done by `y = y_d`.

The saxpy kernel is launched by the statement `call saxpy<<<...>>>(x_d, y_d, a)`. The information between the triple chevrons is the execution configuration, which dictates how many device threads execute the kernel in parallel. In CUDA there is a hierarchy of threads in software which mimics how thread processors are grouped on the GPU. In CUDA we speak of launching a kernel with a grid of thread blocks.
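For readers who want something to compile, here is a minimal sketch of a complete SAXPY program consistent with the fragments quoted in this post. Several details are assumptions rather than anything stated in the text: the execution-configuration variables `grid` and `tBlock`, the problem size `N = 40000`, the block size of 256 threads, the initial values of `x`, `y` and `a`, the bounds check in the kernel, and the final error check.

```fortran
module mathOps
contains
  ! Kernel: runs on the device, one thread per array element.
  attributes(global) subroutine saxpy(x, y, a)
    implicit none
    real :: x(:), y(:)
    real, value :: a          ! scalar passed by value from the host
    integer :: i, n
    n = size(x)
    ! Global 1-based index of this thread (blockIdx and threadIdx start at 1).
    i = blockDim%x * (blockIdx%x - 1) + threadIdx%x
    ! Assumed guard: the last block may contain threads beyond the array end.
    if (i <= n) y(i) = y(i) + a*x(i)
  end subroutine saxpy
end module mathOps

program testSaxpy
  use mathOps                 ! user-defined module holding the kernel
  use cudafor                 ! CUDA Fortran definitions (dim3, etc.)
  implicit none
  integer, parameter :: N = 40000      ! assumed problem size
  real :: x(N), y(N), a                ! host arrays
  real, device :: x_d(N), y_d(N)       ! device arrays
  type(dim3) :: grid, tBlock           ! assumed names for the launch configuration

  ! Assumed configuration: 256 threads per block, enough blocks to cover N.
  tBlock = dim3(256, 1, 1)
  grid = dim3(ceiling(real(N)/tBlock%x), 1, 1)

  ! Assumed initial host data.
  x = 1.0; y = 2.0; a = 2.0

  ! Host-to-device transfers by plain assignment.
  x_d = x
  y_d = y

  ! Kernel launch; the execution configuration sits between the chevrons.
  call saxpy<<<grid, tBlock>>>(x_d, y_d, a)

  ! Device-to-host transfer of the result.
  y = y_d

  ! With the values above every element should equal 2.0 + 2.0*1.0.
  write(*,*) 'Max error: ', maxval(abs(y - 4.0))
end program testSaxpy
```

Saved with a `.cuf` extension, a file like this should build with a CUDA Fortran compiler such as `nvfortran`. To use the allocatable variant mentioned in the text, the device declaration would become `real, device, allocatable :: x_d(:), y_d(:)` followed by `allocate(x_d(N), y_d(N))` before the transfers.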

CUDA Fortran for Scientists and Engineers shows how high-performance application developers can leverage the power of GPUs using Fortran.
